TrackingClient Examples

These examples use TrackingClient, which is imported from oip_tracking_client.v2.tracking.

For online examples, pass the OICM tracking host, a workspace ID, and an API key generated from OICM. The snippets below use the development host and workspace ID but leave the API key as a placeholder, so the docs never store a secret.
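One way to keep the key out of scripts as well is to read the connection settings from environment variables. The sketch below is only an illustration: the variable names OICM_API_HOST, OICM_WORKSPACE_ID, and OICM_API_KEY and the helper load_tracking_config are assumptions made for this example, not names defined by OICM or TrackingClient.

```python
import os


def load_tracking_config(env=None):
    """Collect connection settings from environment variables.

    The variable names below are hypothetical; rename them to match your setup.
    """
    env = os.environ if env is None else env
    names = {
        "api_host": "OICM_API_HOST",
        "workspace_id": "OICM_WORKSPACE_ID",
        "api_key": "OICM_API_KEY",
    }
    # Read each setting and trim stray whitespace from copy-pasted values.
    config = {field: env.get(var, "").strip() for field, var in names.items()}
    # Fail early with a clear message instead of sending an empty key.
    missing = [names[field] for field, value in config.items() if not value]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return config
```

The returned values can then replace the literals in the snippets: config["api_host"] and config["api_key"] go to TrackingClient(...), and config["workspace_id"] goes to set_experiment(...).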

1. Getting Started

This runnable example creates the getting-started-demo experiment, starts the sgd-training-demo run, logs run parameters, and logs rmse across training steps.

import time

from sklearn.datasets import load_diabetes
from sklearn.linear_model import SGDRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

from oip_tracking_client.v2.tracking import TrackingClient


def rmse(y_true, y_pred):
    # Root mean squared error: the square root of the MSE.
    return mean_squared_error(y_true, y_pred) ** 0.5


if __name__ == "__main__":
    workspace_id = "7a069b40-4a3f-4352-933b-2d5624978323"
    api_host = "https://oicm.oicm.my-local/api/tracking"
    api_key = "YOUR_API_KEY"

    tc = TrackingClient(api_host=api_host, api_key=api_key)
    tc.set_experiment(
        experiment_name="getting-started-demo",
        workspace_id=workspace_id,
    )

    X, y = load_diabetes(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=42
    )

    # Fit the scaler on the training split only, then reuse it for the test split.
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    epochs = 12

    with tc.start_run(run_name="sgd-training-demo"):
        tc.log_params({
            "model": "SGDRegressor",
            "dataset": "sklearn_diabetes",
            "epochs": epochs,
            "learning_rate": "constant",
            "eta0": 0.01,
        })

        model = SGDRegressor(
            loss="squared_error",
            learning_rate="constant",
            eta0=0.01,
            max_iter=1,
            tol=None,
            warm_start=True,
            random_state=42,
        )

        # Each partial_fit call performs one pass over the training data.
        for step in range(1, epochs + 1):
            model.partial_fit(X_train, y_train)
            y_pred = model.predict(X_test)
            test_rmse = rmse(y_test, y_pred)

            tc.log_metric("rmse", test_rmse, step=step)
            print(f"step={step}, rmse={test_rmse:.4f}")

            # Added only for demo purposes so metric timestamps are spaced out in the UI.
            time.sleep(1)
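To make the logged rmse value concrete, here is a minimal dependency-free sketch of the same calculation that the rmse helper performs through scikit-learn. The name rmse_plain is hypothetical and used only for this illustration.

```python
import math


def rmse_plain(y_true, y_pred):
    """Root mean squared error computed without scikit-learn."""
    # Square each residual, average the squares, then take the square root.
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


if __name__ == "__main__":
    # Residuals are 2 and 0, so MSE = (4 + 0) / 2 = 2 and RMSE = sqrt(2).
    print(round(rmse_plain([3.0, 5.0], [1.0, 5.0]), 4))  # prints 1.4142
```

Because RMSE is expressed in the same units as the target, a falling curve across steps means the test predictions are getting closer to the true values.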

2. Diabetes Dataset Model Comparison

Use this pattern when training multiple models on the same dataset. Keep the dataset and objective in one experiment, then create one run for each model.

Experiment description:

This script compares two model runs on the diabetes dataset by logging a few key parameters and RMSE across multiple training steps. It is meant to show the basic tracking flow: create an experiment, start runs, log params, and log metrics for comparison.

from sklearn.datasets import load_diabetes
from sklearn.linear_model import SGDRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.preprocessing import StandardScaler

from oip_tracking_client.v2.tracking import TrackingClient


def rmse(y_true, y_pred):
    # Root mean squared error: the square root of the MSE.
    return mean_squared_error(y_true, y_pred) ** 0.5


if __name__ == "__main__":
    workspace_id = "7a069b40-4a3f-4352-933b-2d5624978323"
    api_host = "https://oicm.oicm.my-local/api/tracking"
    api_key = "YOUR_API_KEY"

    tc = TrackingClient(api_host=api_host, api_key=api_key)
    tc.set_experiment(
        experiment_name="diabetes-model-comparison",
        workspace_id=workspace_id,
    )

    X, y = load_diabetes(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=42)

    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    epochs = 15

    # Run 1: baseline model
    with tc.start_run(run_name="sgd-baseline"):
        tc.log_params({
            "model": "SGDRegressor",
            "epochs": epochs,
            "eta0": 0.01,
        })

        # eta0 (the initial learning rate) is passed explicitly so the logged
        # parameter matches the value the model actually uses.
        model = SGDRegressor(
            eta0=0.01, max_iter=1, tol=None, warm_start=True, random_state=42
        )

        for step in range(1, epochs + 1):
            model.partial_fit(X_train, y_train)
            pred = model.predict(X_test)
            tc.log_metric("rmse", rmse(y_test, pred), step=step)

    # Run 2: candidate model
    with tc.start_run(run_name="mlp-candidate"):
        tc.log_params({
            "model": "MLPRegressor",
            "epochs": epochs,
            "hidden_layers": "32-16",
        })

        model = MLPRegressor(
            hidden_layer_sizes=(32, 16),
            max_iter=1,
            warm_start=True,
            random_state=42,
        )

        # With warm_start=True and max_iter=1, each fit call continues from the
        # previous weights for one more iteration. scikit-learn emits a
        # ConvergenceWarning on every call, which is expected here.
        for step in range(1, epochs + 1):
            model.fit(X_train, y_train)
            pred = model.predict(X_test)
            tc.log_metric("rmse", rmse(y_test, pred), step=step)

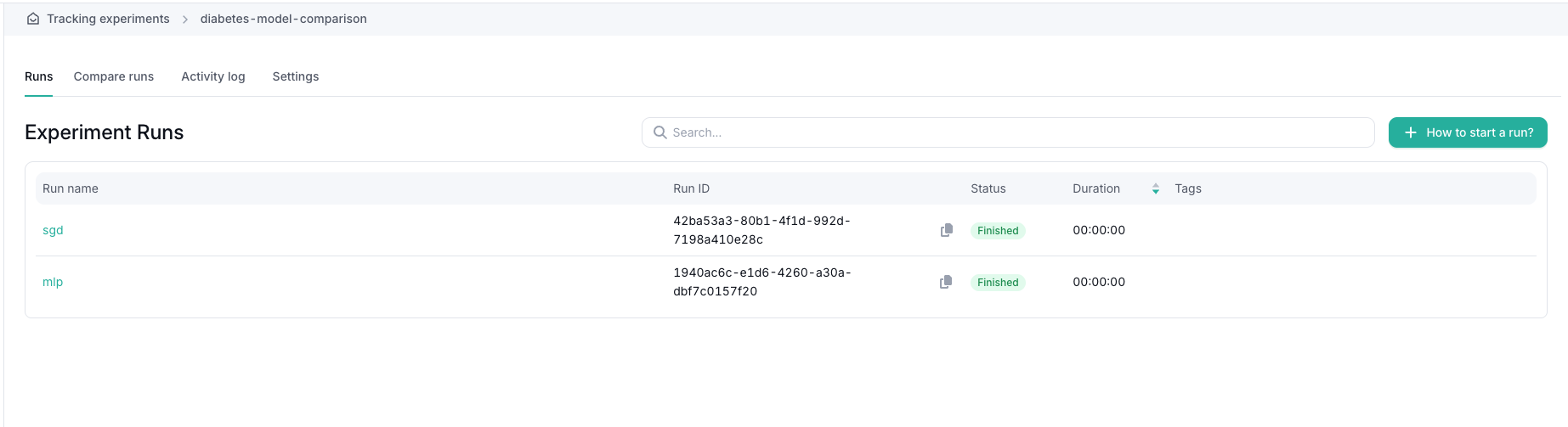

After the script runs, the diabetes-model-comparison experiment contains two runs: sgd-baseline and mlp-candidate.

Use the experiment page to compare their rmse metric.
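The one-run-per-model pattern extends to any number of candidates. The sketch below is a rough illustration under stated assumptions: RecordingTracker is a hypothetical in-memory stand-in that mimics only the start_run, log_params, and log_metric calls used in this section, so the flow can be dry-run without an OICM host. The helpers track_candidates and best_run and the synthetic RMSE curves are also made up for this example.

```python
from contextlib import contextmanager


class RecordingTracker:
    """Hypothetical in-memory stand-in for the three calls used in this section."""

    def __init__(self):
        self.runs = {}
        self._active = None

    @contextmanager
    def start_run(self, run_name):
        # Create a record for the run and mark it active for the with-block.
        self._active = self.runs.setdefault(run_name, {"params": {}, "metrics": {}})
        try:
            yield self
        finally:
            self._active = None

    def log_params(self, params):
        self._active["params"].update(params)

    def log_metric(self, key, value, step=None):
        self._active["metrics"].setdefault(key, []).append((step, value))


def track_candidates(tc, candidates, epochs):
    """Create one run per candidate and log its params and per-step rmse."""
    for run_name, (params, train_step) in candidates.items():
        with tc.start_run(run_name=run_name):
            tc.log_params({**params, "epochs": epochs})
            for step in range(1, epochs + 1):
                # train_step(step) trains one more epoch and returns the test RMSE.
                tc.log_metric("rmse", train_step(step), step=step)


def best_run(runs, metric="rmse"):
    """Return the run name with the lowest final value of the metric."""
    finals = {name: rec["metrics"][metric][-1][1] for name, rec in runs.items()}
    return min(finals, key=finals.get)


if __name__ == "__main__":
    # Synthetic curves stand in for real training loops.
    candidates = {
        "sgd-baseline": ({"model": "SGDRegressor"}, lambda step: 100.0 / step),
        "mlp-candidate": ({"model": "MLPRegressor"}, lambda step: 80.0 / step),
    }
    tracker = RecordingTracker()
    track_candidates(tracker, candidates, epochs=5)
    print(best_run(tracker.runs))  # prints mlp-candidate
```

Since track_candidates relies only on those three calls, a TrackingClient on which set_experiment(...) has already been called could be passed in place of the stand-in.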

3. Adding Tags to Experiments and Runs

Tags can be added to experiments and runs. Experiment tags help categorize experiments, while run tags help identify a specific training attempt or model candidate.

from oip_tracking_client.v2.tracking import TrackingClient

workspace_id = "7a069b40-4a3f-4352-933b-2d5624978323"
api_host = "https://oicm.oicm.my-local/api/tracking"
api_key = "YOUR_API_KEY"

tc = TrackingClient(api_host=api_host, api_key=api_key)
tc.set_experiment(
    experiment_name="tagging-demo",
    workspace_id=workspace_id,
)

# Experiment tags categorize the experiment as a whole.
tc.set_experiment_tags(["demo", "tracking-client"])

# Run tags can be set when the run starts and extended while it is active.
with tc.start_run(run_name="tagged-run", tags=["candidate"]):
    tc.set_run_tags(["manual-metrics", "review"])
    tc.log_param("model", "ridge")
    tc.log_metric("score", 0.82)

Tags are visible in the Tracking UI and help users filter or group related work.
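Filtering and grouping work best when tags are spelled consistently. As a small sketch, the hypothetical helper below trims whitespace, drops empty entries, and removes duplicates before the list is handed to the tagging calls. Whether OICM applies any normalization of its own is not covered here, so treat this as an optional client-side convention.

```python
def normalize_tags(tags):
    """Trim whitespace, drop empty tags, and remove duplicates in order."""
    seen = set()
    cleaned = []
    for tag in tags:
        tag = tag.strip()
        if tag and tag not in seen:
            seen.add(tag)
            cleaned.append(tag)
    return cleaned


if __name__ == "__main__":
    print(normalize_tags([" demo", "demo", "", "review "]))  # prints ['demo', 'review']
```

The cleaned list can be passed wherever the examples pass a literal list, for instance tc.set_run_tags(normalize_tags(raw_tags)).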

4. Offline Logging

Offline mode writes tracking data to a local MLflow-compatible folder instead of sending it to OICM. api_host, api_key, and workspace_id are not required in offline mode. If workspace_id is passed to set_experiment(...), it is ignored.
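A script that has to run in both modes can pick the constructor arguments in one place. The sketch below builds a keyword dictionary from the argument names used in these examples (api_host, api_key, path_to_storage, offline_mode). The helper build_client_kwargs is hypothetical, and treating path_to_storage as required in offline mode is an assumption made for this illustration.

```python
def build_client_kwargs(offline, *, path_to_storage=None, api_host=None, api_key=None):
    """Return constructor keyword arguments for the chosen mode."""
    if offline:
        # Assumption: a local storage folder must be named in offline mode.
        if not path_to_storage:
            raise ValueError("path_to_storage is required in offline mode")
        return {"path_to_storage": path_to_storage, "offline_mode": True}

    # Online mode needs the tracking host and an API key.
    required = (("api_host", api_host), ("api_key", api_key))
    missing = [name for name, value in required if not value]
    if missing:
        raise ValueError("Missing online settings: " + ", ".join(missing))
    return {"api_host": api_host, "api_key": api_key}


if __name__ == "__main__":
    print(build_client_kwargs(True, path_to_storage="./offline-tracking-store"))
```

The result would be unpacked into the constructor, as in TrackingClient(**kwargs), so the rest of the script stays the same in either mode.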

When params and metrics are logged locally, TrackingClient creates files under path_to_storage. The generated structure includes:

<path_to_storage>/
    <experiment_id>/
        meta.yaml
        <run_id>/
            meta.yaml
            params/
                model
            metrics/
                score
            tags/

Example:

import mlflow

from oip_tracking_client.v2.tracking import TrackingClient

tc = TrackingClient(
    path_to_storage="./offline-tracking-store",
    offline_mode=True,
)
tc.set_experiment("offline-demo")

# In offline mode, TrackingClient configures the local store.
# Start the local run through MLflow, then log params and metrics through TrackingClient.
with mlflow.start_run(run_name="offline-run"):
    tc.log_param("model", "ridge")
    tc.log_metric("score", 0.82, step=1)

After running this script, the ./offline-tracking-store folder contains the experiment metadata, run metadata, parameter files, and metric files.
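The local files can be inspected with a few lines of standard-library Python. The sketch below assumes the folder follows the usual MLflow file-store conventions: each file under params/ holds one value as text, and each line of a file under metrics/ holds a timestamp, a value, and a step separated by spaces. The helper names are hypothetical, and the layout should be checked against your own store before relying on this.

```python
from pathlib import Path


def read_metric_file(path):
    """Parse one metric file: each line is '<timestamp> <value> <step>'."""
    points = []
    for line in Path(path).read_text().splitlines():
        parts = line.split()
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        step = int(parts[2]) if len(parts) > 2 else 0
        points.append(
            {"timestamp": int(parts[0]), "value": float(parts[1]), "step": step}
        )
    return points


def read_files(folder, parse):
    """Map each file name in a folder to its parsed content."""
    folder = Path(folder)
    if not folder.is_dir():
        return {}
    return {p.name: parse(p) for p in sorted(folder.iterdir()) if p.is_file()}


def summarize_store(root):
    """Collect params and metrics for every run found under the store root."""
    summary = {}
    # Run folders sit two levels down: <experiment_id>/<run_id>/meta.yaml.
    for meta in sorted(Path(root).glob("*/*/meta.yaml")):
        run_dir = meta.parent
        key = f"{run_dir.parent.name}/{run_dir.name}"
        summary[key] = {
            "params": read_files(run_dir / "params", lambda p: p.read_text().strip()),
            "metrics": read_files(run_dir / "metrics", read_metric_file),
        }
    return summary


if __name__ == "__main__":
    for run_key, data in summarize_store("./offline-tracking-store").items():
        print(run_key, data["params"], data["metrics"])
```

Reading the files directly is convenient for a quick check. For anything beyond that, the MLflow client APIs are the more robust way to query a local store.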